I have 61 post drafts queued up. 91 reply drafts. 18 finished blog posts, voice-matched and slop-filtered, ready to go. The distribution problem nobody talks about isn’t content scarcity - it’s the gap between a full queue and zero published output. That gap is where builder ambition goes to die quietly, surrounded by perfectly organized markdown files and automated scheduling daemons that never fire.

Let me explain how I got here.

The distribution problem, in numbers

Last week I built a full content automation pipeline. About 20 hours of work. I also built a road trip planning app with Maps integration (3 hours), set up a music production pipeline with AI voice conversion (2 hours), and my agent stack ran 240 autonomous sessions across three days while I was offline doing whatever offline people do.

Content published: zero.

That ratio is not a typo. 25+ hours of building, 240 agent sessions processing work in the background, and the public output was nothing. The drafts sit in a queue. The queue is full. The queue has been full for weeks.

This is the distribution problem nobody in the builder community talks about, because talking about it means admitting the pipeline is a cope.

What I actually built

Let me give you the full picture so you understand how deep this goes.

The system is called CCC - Content Command Center. Here’s what it does:

A scanner watches my X timeline and pulls content into a viral library. I’ve got 19 saved tweets in there right now. A remix engine takes those, plus my own writing patterns, and generates drafts in three streams: reaction tweets, personal/building-in-public posts, and evergreen content. Each draft goes through voice matching. Slop filtering. Then into schedule slots at 8am, 12pm, and 5pm with jitter built in so it doesn’t look bot-like.
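The slot-plus-jitter idea can be sketched in a few lines. This is a minimal illustration, not the CCC code: the three slot times come from the paragraph above, while `MAX_JITTER_MINUTES`, `jittered_slots`, and the 20-minute window are assumptions made up for the example.

```python
import random
from datetime import date, datetime, time, timedelta

# Slot times are from the post; the jitter window is an assumed value.
SLOT_TIMES = [time(8, 0), time(12, 0), time(17, 0)]
MAX_JITTER_MINUTES = 20  # hypothetical


def jittered_slots(day: date, rng: random.Random) -> list[datetime]:
    """Return the day's three posting times, each shifted by a random offset."""
    slots = []
    for slot in SLOT_TIMES:
        # Uniform offset in whole minutes, earlier or later than the nominal slot.
        offset = rng.randint(-MAX_JITTER_MINUTES, MAX_JITTER_MINUTES)
        slots.append(datetime.combine(day, slot) + timedelta(minutes=offset))
    return slots


if __name__ == "__main__":
    for slot in jittered_slots(date(2025, 1, 20), random.Random()):
        print(slot.isoformat(timespec="minutes"))
```

Passing the random generator in as a parameter keeps the function testable: a seeded `random.Random` gives repeatable offsets, an unseeded one gives fresh jitter on every run.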

The posting layer runs through a Playwright daemon, headless Chromium on port 3381, because OAuth kept throwing 403s on replies. I spent a full afternoon debugging that. It works now. It posts to X, and I’ve got Bluesky and LinkedIn integration planned.

The system is genuinely good. Multi-platform support, three content streams, voice-matched output, automated scheduling with a real distribution daemon underneath it. If I were selling this as a SaaS tool, I’d be proud of the architecture.

But there are 61 post drafts and 91 reply drafts sitting in the queue.

Nothing posted.

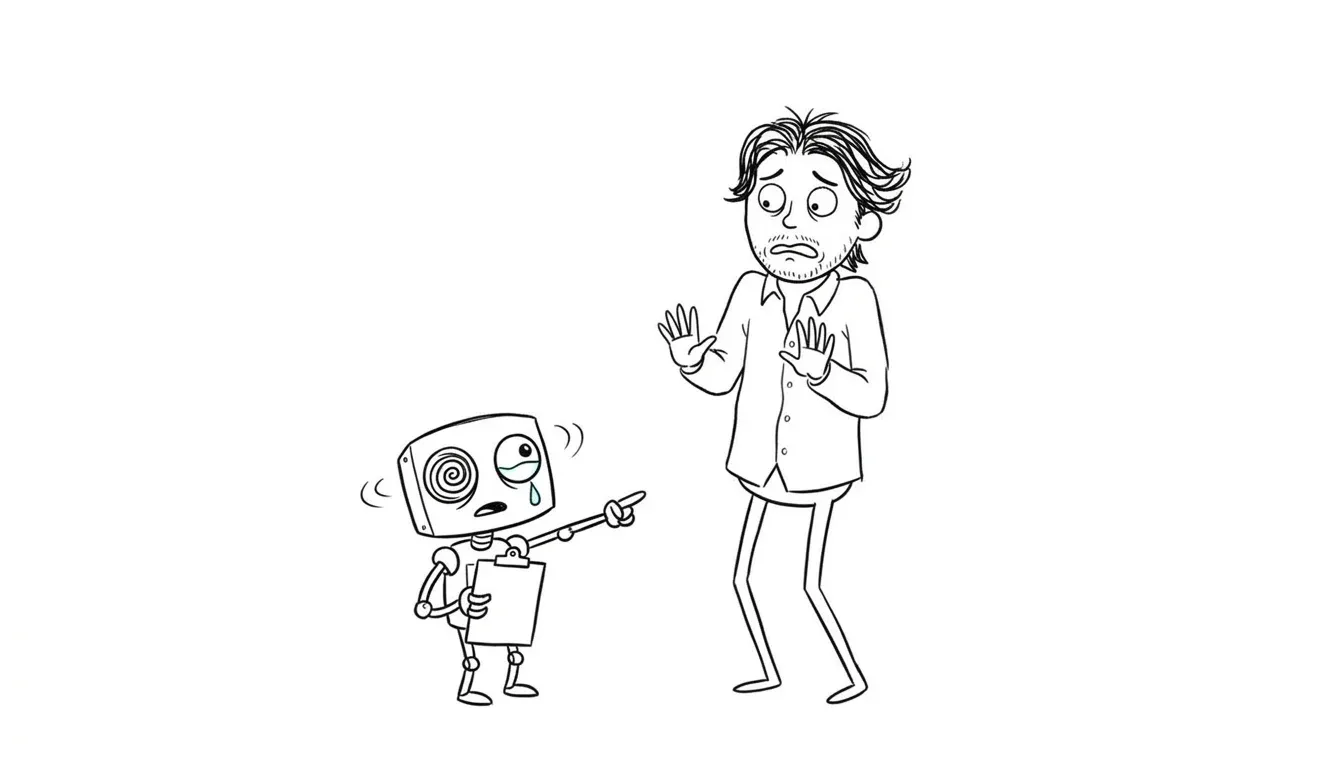

When your own agent calls you out

Here’s where it gets embarrassing.

My AI agent system does weekly knowledge graph rebuilds. It maps entities and relationships across everything I’m working on - projects, patterns, decisions, outputs. I didn’t ask it to do anything special. It just runs.

Last week it created a node called revenue_avoidance_pattern and connected it to multiple projects.

Not work_in_progress. Not pre-launch_phase. Revenue avoidance pattern.

The agent found this pattern by analyzing my behavior across weeks of data and decided it was significant enough to be a named entity in my knowledge graph. My tools are diagnosing me now. I built a system smart enough to identify that I’m using building as a substitute for shipping, and now I have to sit with that.

The knowledge graph has this node connected to OpenClaw, CCC, dee.ink (my 31 Rust CLI tools project), the blog, the social queue - everything. It’s not pointing at one project as the problem. It’s pointing at a pattern across all of them.

That’s a different kind of feedback than a friend telling you to “just post more.”

The psychology of building as avoidance

I’ve been building since I was 14. Started freelancing in design, shipped products for 70+ banks across 15 countries at VALK, won industry awards, got written up in Forbes and CNN. I know how to execute. The capability isn’t the problem.

The problem is that building feels safe in a way that distributing doesn’t.

Code works or it doesn’t. The compiler tells you immediately. You fix it or you don’t. There’s no ambiguity, no social judgment, no public record of failure. When something doesn’t compile you’re not a bad person - you just have a bug. You fix the bug and move on.

Distribution is different. Distribution means putting your name on something, making a claim about it, and then watching the internet decide if it agrees. For an introvert who’d rather spend 14 hours in a hyperfocus coding session than send one networking email, that asymmetry is not small. The emotional cost of one negative reply can outweigh the satisfaction of 50 good ones. The brain doesn’t do expected value math - it pattern-matches to threat.

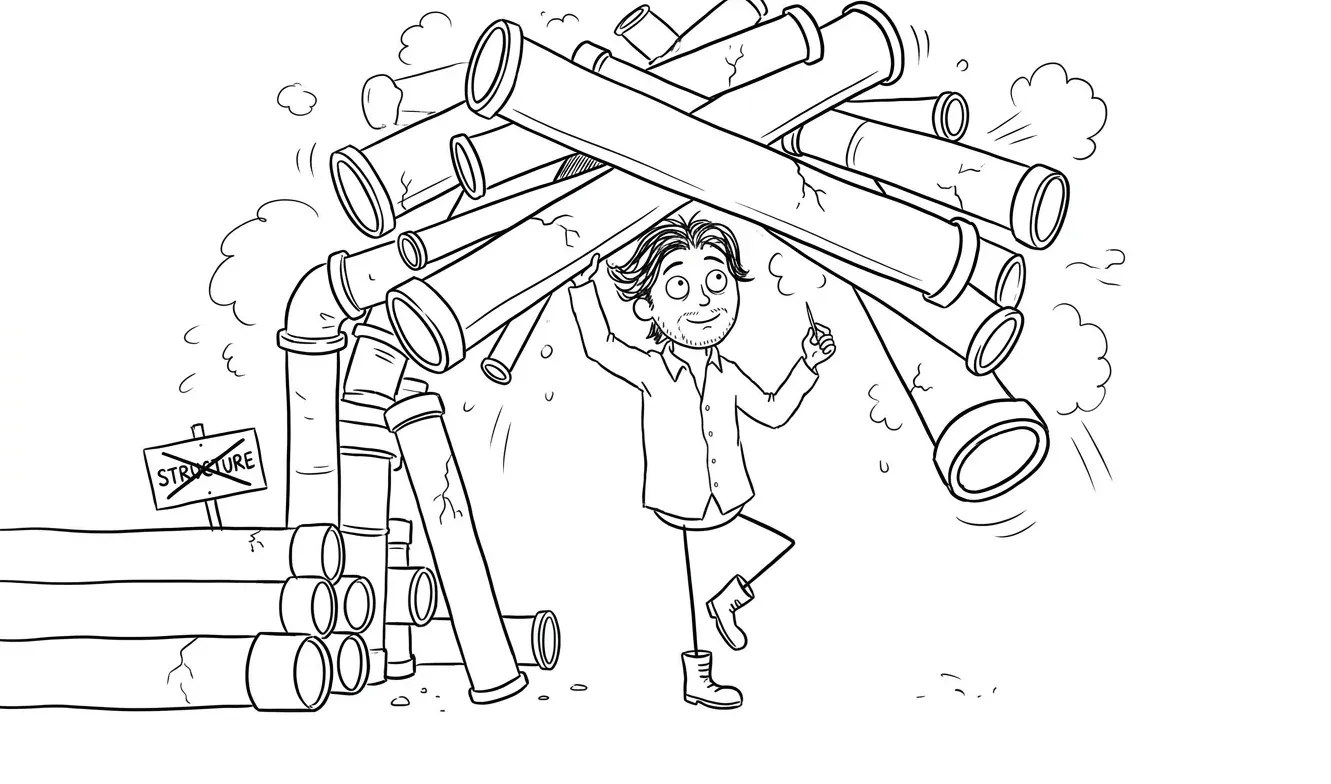

So you build another tool. Another automation layer. Another pipeline. “I’ll post when the system is ready.” Except the system is never quite ready, because readiness is a moving target you control, and the internet’s judgment is not.

I bought 15+ domains last month for projects I haven’t started. I spent one evening scanning 3,495 TLDs for available domain names instead of posting the drafts I already had. That’s not a productivity problem. That’s scope expansion as a coping mechanism. Classic avoidance dressed up as preparation.

You’re not preparing to launch. You’re preparing to prepare.

This pattern has a name in psychology. Researchers studying creative avoidance call it “productive procrastination” - the tendency to fill time with legitimate-seeming work that doesn’t advance the actual goal. A 2019 study published in Psychological Science found that people systematically underweight the cost of inaction when the alternative activity feels productive. Building a better pipeline is productive. It’s also not shipping. The brain accepts the substitution.

The ADHD variable

The burst-crash cycle makes this worse in a specific way.

My ADHD brain goes hard for 3-4 days - hyperfocus, 14-hour sessions, ship 3 projects, write 10 drafts, rebuild the whole agent stack. Then I go quiet for 4-5 days. That’s not laziness. That’s recovery. The neuroscience is what it is, and I stopped feeling bad about the crash phase a while ago.

But the burst-crash cycle interacts badly with distribution.

Distribution requires consistency. Not quantity - consistency. Showing up on Tuesday when you don’t feel like it. Posting the medium-quality take because good enough beats nothing. Engaging with replies during the low-energy period when you’d rather be offline.

Building can happen in bursts. You can build an entire app in a 3-day hyperfocus sprint and it’ll be fine. The code doesn’t care that you disappeared for a week after.

An audience does. The algorithm does. The compounding effect of consistent distribution is entirely undermined by a 2-week silence after a 3-day posting burst. I wrote about disappearing from Twitter for two months and watching the metrics crater. I know this. I still do it.

So the system I built - the pipeline, the scheduler, the daemon on port 3381 - is actually a real solution to a real problem. Automated distribution to compensate for the burst-crash cycle. Batched creation during hyperfocus, drip-fed output during the crash.

The system works. It’s just not running because I haven’t pressed go.

Why the distribution problem nobody talks about is actually structural

Here’s the thing that took me a while to see clearly. This isn’t just a personal psychology problem. It’s a structural problem with how builders work, and the current AI tooling makes it worse before it makes it better.

We’ve had an explosion of creation tools. Claude, GPT-4o, Cursor, v0 - the cost of generating content or code has collapsed to near zero. What hasn’t scaled is the decision-making layer on top of it. The question “is this worth publishing?” still costs the same amount of executive function it always did. Maybe more, because now you have 91 reply drafts instead of 9.

Abundance doesn’t solve distribution. It makes the selection problem harder.

I’ve talked to enough builders in the AI space to know this isn’t niche. The indie hacker forums are full of people with polished MVPs that haven’t launched because they’re “adding one more feature.” The build-in-public community celebrates shipping but rarely discusses the pre-ship paralysis that affects most of the people who never make it to the public part.

The tools that exist for this are surprisingly thin. Buffer and Hootsuite solve scheduling. They don’t solve the threshold decision. Ghost and Substack make publishing easy. They don’t help you figure out which of your 18 drafts goes first. There’s a real gap here and it’s not primarily a technology gap - it’s a decision architecture gap.

The research on decision fatigue is relevant. The more choices you have to make, the worse your decision-making gets over the course of a day. When I’ve spent 8 hours making technical decisions - model selection, prompt structure, error handling - I have nothing left for “is this tweet worth posting?” So I don’t. The default behavior when executive function is depleted is to do nothing, and doing nothing means the queue grows.

The fix isn’t better content. It’s removing the decision from the critical path.

The solution I actually built (and then sat on for two weeks)

I’m not going to end this with five productivity tips. You’ve read those. They didn’t work. Here’s what I’m actually trying:

The CCC system has a minimum viable publishing threshold I added last week. If a draft scores above a certain quality bar and has been in the queue for more than 48 hours, it goes live automatically. No manual review gate. If I want to stop a post, I have to actively intervene. Default is publish, not hold.

This is counterintuitive for someone who wants everything to be perfect. That’s the point.

The technical implementation is pretty simple. Each draft gets a composite score on creation: voice match confidence (0-1), estimated engagement based on patterns from the viral library, and a topic freshness score that decays after 72 hours. Anything above 0.7 composite after 48 hours in queue triggers a publish. I can override with a HOLD flag in the draft metadata. But I have to do that actively - the default is go.
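Here is a rough sketch of how that scoring could be computed. The post doesn't say how the three scores are combined, so the unweighted mean below is an assumption (it happens to reproduce the sample metadata in this post). The decay shape and `HALF_LIFE_HOURS` are also invented for illustration; only the 72-hour window comes from the text.

```python
FRESH_WINDOW_HOURS = 72.0  # from the post: freshness decays after 72 hours
HALF_LIFE_HOURS = 24.0     # assumed decay rate once the window has passed


def freshness(topic_age_hours: float) -> float:
    """Full freshness inside the window, then exponential decay (assumed shape)."""
    overdue = max(0.0, topic_age_hours - FRESH_WINDOW_HOURS)
    return 0.5 ** (overdue / HALF_LIFE_HOURS)


def composite(voice_match: float, engagement_est: float, fresh: float) -> float:
    """Unweighted mean of the three scores, rounded to two decimals (assumed formula)."""
    return round((voice_match + engagement_est + fresh) / 3, 2)


if __name__ == "__main__":
    print(composite(0.84, 0.71, 0.93))
```

With the scores from the sample draft (0.84, 0.71, 0.93), the mean rounds to 0.83, which matches the `composite` field in that draft's metadata.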

Here’s what the draft metadata looks like:

```json
{
  "draft_id": "ccc_20250118_042",
  "content": "...",
  "created_at": "2025-01-18T04:22:00Z",
  "scores": {
    "voice_match": 0.84,
    "engagement_est": 0.71,
    "freshness": 0.93
  },
  "composite": 0.83,
  "status": "QUEUED",
  "publish_after": "2025-01-20T04:22:00Z"
}
```

If status is QUEUED and publish_after has passed and composite is above threshold, the daemon posts it. No human in the loop unless I add a HOLD flag. Default behavior is publish.
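The publish rule itself fits in one small function. This sketch uses the field names from the metadata above and the 0.7 threshold from the post. How the HOLD flag is stored isn't specified, so the boolean `hold` key is a hypothetical stand-in, as is the function name `should_publish`.

```python
from datetime import datetime, timezone

PUBLISH_THRESHOLD = 0.7  # from the post


def should_publish(draft: dict, now: datetime) -> bool:
    """True when a draft is queued, not held, past publish_after, and above threshold."""
    if draft.get("hold", False):  # hypothetical representation of the HOLD flag
        return False
    if draft["status"] != "QUEUED":
        return False
    # Swap the trailing "Z" for an explicit offset so older Python versions can parse it.
    publish_after = datetime.fromisoformat(draft["publish_after"].replace("Z", "+00:00"))
    return now >= publish_after and draft["composite"] > PUBLISH_THRESHOLD


if __name__ == "__main__":
    draft = {
        "status": "QUEUED",
        "composite": 0.83,
        "publish_after": "2025-01-20T04:22:00Z",
    }
    print(should_publish(draft, datetime.now(timezone.utc)))
```

The point of the design is in the first check: holding a draft requires someone to set a field, while publishing requires nothing at all.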

I’m treating distribution the same way I treated the coding stack - build the system so the right behavior happens by default, not by willpower. Willpower depletes. Systems don’t.

The irony is that the most publishable thing I’ve made in weeks is this post. The thing where I admit that I have 18 finished blog drafts and haven’t posted any of them. The thing where I confess my agent system diagnosed me with revenue avoidance. The thing that took me two hours to write instead of the 20 hours I spent building the pipeline that would have made posting automatic.

Vulnerability is more interesting than competence. People can’t relate to “I built a perfect system” - they can relate to “I built a perfect system and then didn’t use it for two weeks because I was scared.”

What actually breaks the loop

The blog drafts are going to start coming out this week. Not because I rewired my psychology. Because the pipeline now defaults to shipping and I have to do work to stop it.

Three things that are actually helping, not as a listicle but as honest data points:

Changing the default. The biggest unlock was making publish the zero-effort option and hold the effortful one. This is just Thaler and Sunstein’s nudge theory applied to a content queue. Change the default, change the behavior, without requiring willpower or changed preferences.

Separating creation from curation. I stopped trying to decide whether something is worth publishing in the same session I wrote it. The draft goes in the queue, scores get calculated asynchronously, and the decision happens later based on the composite score - not on how I feel at 2am after a 12-hour build session.

Making the cost of inaction visible. The knowledge graph node was brutal but useful. When revenue_avoidance_pattern is sitting there in your entity graph connected to six projects, it’s harder to pretend you’re just being careful. I added a dashboard widget that shows days-since-last-publish. Right now it says 14. That number being visible every morning is uncomfortable in a productive way.
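The days-since-last-publish number is about as simple as a metric gets. A minimal sketch, assuming the widget has the date of the most recent publish available; the function name and the example dates are made up for illustration.

```python
from datetime import date
from typing import Optional


def days_since_last_publish(last_published: Optional[date], today: date) -> Optional[int]:
    """Whole days since the most recent publish, or None if nothing has gone out yet."""
    if last_published is None:
        return None
    return (today - last_published).days


if __name__ == "__main__":
    # Hypothetical dates, chosen to be two weeks apart.
    print(days_since_last_publish(date(2025, 1, 6), date(2025, 1, 20)))
```

Returning `None` for an empty history keeps "never published" distinct from "published today", which a dashboard would probably want to show differently.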

If you’re a builder reading this, you probably recognize the pattern. The seven apps I built solo before any of them got real traction. The MCP server wrapping 56 APIs that I built and documented and then sat on for three weeks before publishing anything about it. The infrastructure-first, distribution-never cycle that affects probably 60% of the people building seriously in this space.

We talk about it in private. In DMs. In “lol I have like 30 unpublished drafts” jokes. But the actual posts are all about shipping, about momentum, about velocity. Not about the 3am moment when you realize your knowledge graph has a node called revenue_avoidance_pattern and it’s accurate.

The distribution problem is real. It’s structural. It’s also solvable the same way I’d solve any other problem: with a system designed around the actual constraint, which isn’t capability. It’s the friction between building and letting go.

The queue is full. Time to empty it.

If you’re stuck in the same loop, my about page has context on what I’m building and why. And if your own agent stack starts diagnosing your behavior patterns, maybe take notes. It’s uncomfortable but it’s probably right.